What Exactly Is Observability (for Cloud Apps)?

The Problem Most Cloud Teams Quietly Live With

If you run cloud applications long enough, you’ve probably heard this before:

“The app is slow… But we’re not sure why.”

Or:

“Users have been complaining that they see this error… We haven’t been able to reproduce it, and we don’t know why they see it.”

Most teams already have something in place: metrics dashboards, logs scattered across individual app servers, and perhaps alerts (which can fire while everyone is asleep).

Yet when an incident happens, diagnosis still takes hours. Engineers jump between tools, grep logs and rely on tribal knowledge or gut feel.

You might have monitoring but still feel blind.

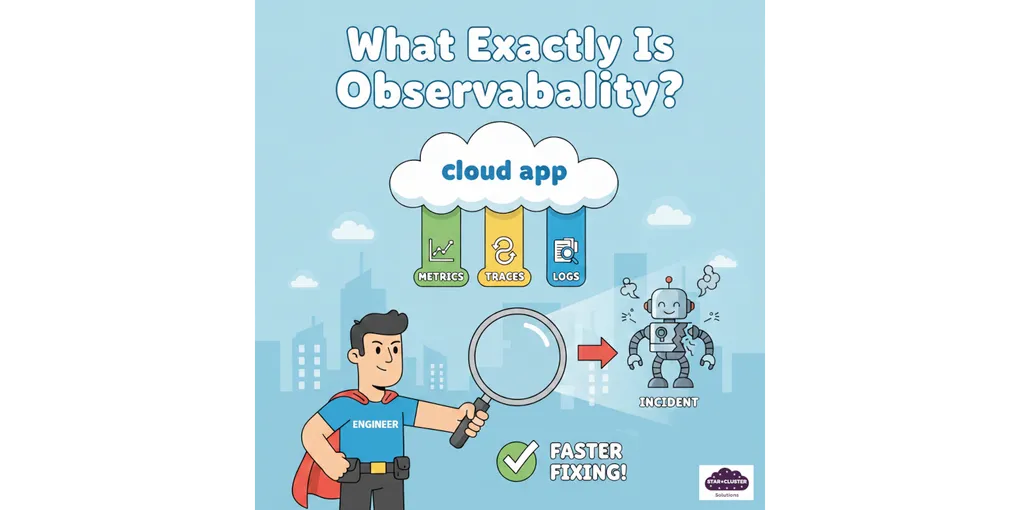

So, What Is Observability, Really?

In simple terms:

- Monitoring tells you something is wrong

- Observability helps you understand why it’s wrong

A practical definition:

Observability is the ability to understand what’s happening inside your system by looking at its outputs without needing to guess or SSH into servers.

The key difference is this: observability helps teams detect problems earlier and get to the fix faster by giving them the context needed to understand what’s actually happening. That should hold even when a failure doesn’t look like anything they’ve seen before.

Why Monitoring Alone Breaks Down in the Cloud

Traditional monitoring worked well when systems were monolithic, predictable and slower to change. Modern cloud systems are none of those things.

Today’s apps involve:

- Microservices and APIs

- Async queues and background jobs

- Third-party dependencies

- Containers that appear and disappear

In the cloud, failures don’t occur in isolation. In my own experience, they combine in ways you didn’t predict. When an upstream app that many other apps rely on fails, it will likely generate a flood of error logs and alerts. Your monitoring dashboard will tell you that a lot of things are wrong, but it is hard to pinpoint the cause unless an experienced DevOps or Site Reliability Engineer is looking at it.

Sometimes a minor hardware limitation can also cause many failures across different apps. I once saw an app on a Kubernetes cluster consume so much memory on shared hardware that other apps failed for lack of memory. It can be argued that this was a misconfiguration, but the reality is that no one had predicted the app would consume that much memory. The team I worked with solved it quite quickly because good observability pinpointed the problematic app fast enough.

The Three Building Blocks (Without the Jargon)

You’ll often hear about “the three pillars of observability”. Here’s the practical version:

Metrics – Is something off?

- Latency

- Error rates

- Resource usage

Great for detection, weak for explanation.
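To make the "detection" role concrete, here is a minimal sketch of a metrics check in Python. The `Request` record, the function names and the thresholds (1% errors, 500 ms p95) are all illustrative assumptions, not values from any particular monitoring tool.

```python
import math
from dataclasses import dataclass


@dataclass
class Request:
    latency_ms: float
    status: int


def error_rate(requests):
    """Fraction of requests that ended with a 5xx status."""
    if not requests:
        return 0.0
    errors = sum(1 for r in requests if r.status >= 500)
    return errors / len(requests)


def percentile(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def is_off(requests, max_error_rate=0.01, max_p95_ms=500.0):
    """Answers only 'is something off?'. Thresholds are hypothetical defaults."""
    p95 = percentile([r.latency_ms for r in requests], 95)
    return error_rate(requests) > max_error_rate or p95 > max_p95_ms
```

Note that `is_off` returns a single yes or no. It can tell you to start looking, but nothing in it says which service or dependency is responsible.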

Logs – What happened?

- Events

- Errors

- Contextual messages

Useful, but overwhelming without structure.
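One common way to add that structure is to emit each log entry as a JSON object with consistent fields. The sketch below uses only Python's standard `logging` module; the field names (`request_id`, `service`, `duration_ms`) and the logger name are assumptions chosen for illustration.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object per line so logs can be filtered by field."""

    def format(self, record):
        payload = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Values passed via `extra=` become attributes on the record.
        for key in ("request_id", "service", "duration_ms"):
            if hasattr(record, key):
                payload[key] = getattr(record, key)
        return json.dumps(payload)


def build_logger(name="checkout"):
    """Return a logger that writes JSON lines to stderr (hypothetical logger name)."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    if not logger.handlers:  # avoid stacking handlers on repeated calls
        handler = logging.StreamHandler()
        handler.setFormatter(JsonFormatter())
        logger.addHandler(handler)
    return logger


if __name__ == "__main__":
    log = build_logger()
    log.error(
        "payment provider timed out",
        extra={"request_id": "req-123", "service": "checkout", "duration_ms": 3000},
    )
```

With fields like these in every entry, "show me all errors for request req-123" becomes a filter rather than a grep across servers.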

Traces – Where did the time go?

- A single request flowing through multiple services

- Shows which dependency actually caused the slowdown
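As a rough illustration of the idea, the toy tracer below records how long each downstream call takes within one request and reports the slowest. It is a deliberately simplified sketch, not how a real tracing library works: the `Trace` class, the span names and the injectable clock are all hypothetical, and the spans are flat siblings rather than a nested tree.

```python
import time
from contextlib import contextmanager


class Trace:
    """Toy in-memory trace: sibling spans that share one trace ID."""

    def __init__(self, trace_id, clock=time.perf_counter):
        self.trace_id = trace_id
        self.spans = []  # list of (name, duration_seconds)
        self._clock = clock

    @contextmanager
    def span(self, name):
        start = self._clock()
        try:
            yield
        finally:
            # Record the duration even if the wrapped call raised.
            self.spans.append((name, self._clock() - start))

    def slowest(self):
        """Return the (name, duration) pair of the longest recorded span."""
        return max(self.spans, key=lambda s: s[1])


if __name__ == "__main__":
    trace = Trace("req-123")
    with trace.span("inventory-service"):
        time.sleep(0.01)  # stand-in for a fast downstream call
    with trace.span("payment-service"):
        time.sleep(0.05)  # stand-in for a slow downstream call
    print(trace.slowest()[0])
```

Production systems typically use a tracing library that also propagates the trace ID across service boundaries. The point here is only that per-call timing turns "the request is slow" into "this call is slow".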

Observability isn’t about collecting all three. It’s about using each tool for what it’s good at: metrics catch problems, traces show where they live, and logs explain the details. The hard part is doing this fast enough to matter.

A Simple Incident Walkthrough

Imagine this scenario:

- An alert fires. You find that checkout latency has increased.

- Metrics show it started right after a deployment.

- A trace reveals slow calls to the payment service.

- Logs confirm timeouts from a third-party API.

Instead of guessing, you know what broke, where it broke and why it broke. Observability turns “we think it’s X” into “we know it’s Y.”
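The last step of that walkthrough, going from a slow trace to the logs that explain it, only works if log entries carry the same identifier as the trace. Here is a minimal sketch, assuming JSON log lines with a `trace_id` field (a hypothetical field name used for illustration):

```python
import json


def logs_for_trace(log_lines, trace_id):
    """Return the parsed log entries that carry the given trace ID."""
    matches = []
    for line in log_lines:
        try:
            entry = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip unstructured lines
        if isinstance(entry, dict) and entry.get("trace_id") == trace_id:
            matches.append(entry)
    return matches


if __name__ == "__main__":
    lines = [
        '{"trace_id": "abc", "service": "payment", "message": "third-party API timeout"}',
        '{"trace_id": "xyz", "service": "cart", "message": "ok"}',
        "plain text line without structure",
    ]
    for entry in logs_for_trace(lines, "abc"):
        print(entry["service"], entry["message"])
```

In practice a log backend would run this filter for you. What matters is that the shared ID exists in the first place.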

What Good Observability Looks Like in Practice

Good observability doesn’t mean perfect dashboards. It usually shows up as:

- Faster incident resolution

- Less finger-pointing between teams

- Shorter, smaller outages

- More confidence during deployments

Mature teams don’t panic less because they’re smarter. They panic less because they can see clearly.

What’s the ROI of Improving Observability?

The return rarely comes from “saving money on tools”. It comes from time, risk and people.

Faster Incident Resolution

A realistic shift many teams see:

- Before: 3-8 hours to find root cause

- After: 30-60 minutes

If 3 engineers are involved and incidents happen twice a month, that’s easily thousands of dollars saved monthly in engineering time alone.
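The arithmetic behind that estimate can be sketched as follows. The hours, engineer count and incident frequency come from the figures above; the hourly cost of 100 is purely a placeholder assumption, so substitute your own loaded engineering rate.

```python
def monthly_savings(hours_before, hours_after, engineers, incidents_per_month, hourly_cost):
    """Return (engineer-hours saved per month, money saved per month)."""
    saved_hours = (hours_before - hours_after) * engineers * incidents_per_month
    return saved_hours, saved_hours * hourly_cost


if __name__ == "__main__":
    HOURLY_COST = 100  # hypothetical rate, not a figure from this article

    # Conservative case: shortest "before" (3 h) against longest "after" (1 h).
    low_hours, low_money = monthly_savings(3, 1, 3, 2, HOURLY_COST)

    # Optimistic case: longest "before" (8 h) against shortest "after" (0.5 h).
    high_hours, high_money = monthly_savings(8, 0.5, 3, 2, HOURLY_COST)

    print(f"{low_hours} to {high_hours} engineer-hours saved per month")
    print(f"{low_money} to {high_money} saved per month at {HOURLY_COST}/hour")
```

Under these assumptions the range is 12 to 45 engineer-hours a month, before counting any reduction in business impact.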

Reduced Business Impact

Shorter incidents usually mean:

- Fewer failed transactions

- Fewer support tickets

- Less customer trust erosion

The goal isn’t zero incidents. It’s smaller blast radius and faster recovery.

Build Trust in Your System

With visibility into what’s actually happening:

- Teams feel confident deploying changes

- Customers see faster resolution, which improves their perception of reliability

- Leadership can make infrastructure decisions based on data, not hunches

Lower Risk and Better Decisions

With actual visibility:

- You catch problems in staging before production

- Changes roll out with confidence, not anxiety

- Teams make infrastructure bets on data instead of assumptions

The ROI often appears well before observability matures.

Common Misconceptions That Hold Teams Back

Here are a few misconceptions that delay the maturing of observability in an organization:

- “We already have logs, so we’re observable”

- “Observability is just expensive monitoring tools”

- “We’ll do it later, when we’re bigger”

In reality:

Observability is primarily a design and usage problem, not a tooling problem.

Many teams spend more money because they lack clarity.

You Don’t Need to Be Perfect to Start

You don’t need to instrument everything. You can start with:

- Critical user journeys

- High-impact services

- Questions you actually ask during incidents

Small, intentional steps usually deliver value surprisingly fast.

Final Thoughts

If your team has monitoring but still feels blind during incidents, you’re not alone.

Most teams don’t need “perfect observability”. They just need clearer signals and better questions.

If you want a second opinion on where a small observability effort could deliver the biggest return, without exploding cost, feel free to reach out. We help teams design practical, cost-aware observability setups that actually get used.